E-Commerce & Entertainment (VOD)

Redefined! Go Agile, Headless & Scalable.

Headless Ecommerce to Revolutionize Your Online Sales. Video on Demand Platform to Stream Your Content Smoothly.

Services overview

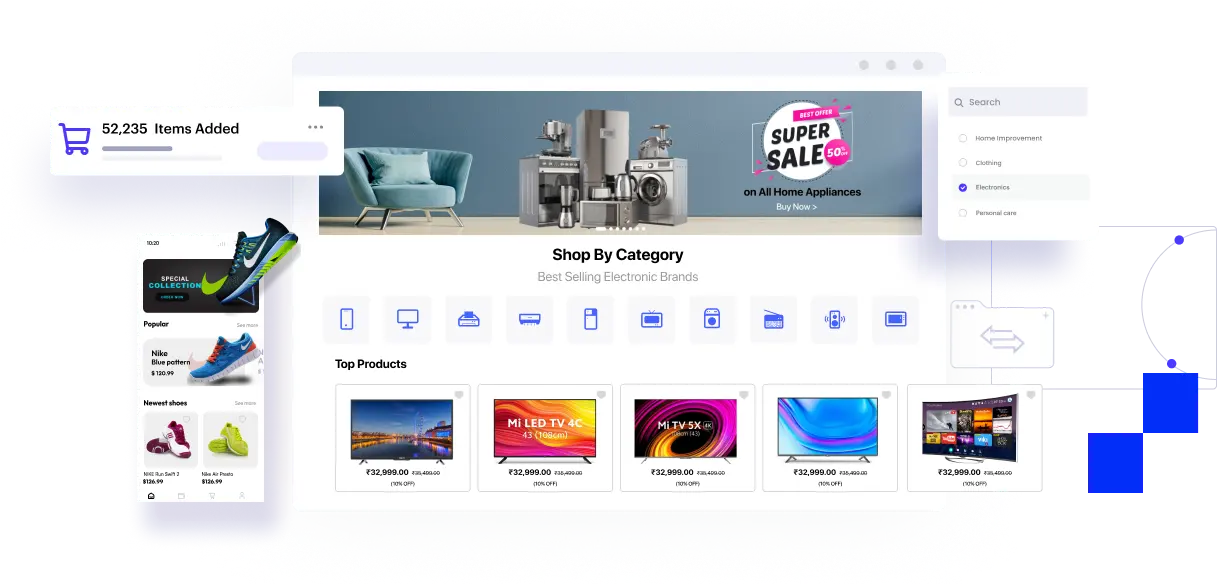

Headless Ecommerce:

Now it’s easy to set up, manage, and sell across any number of channels with an enterprise-level omnichannel experience. Get to market faster with APIs for building a dynamic, differentiated shopping platform.

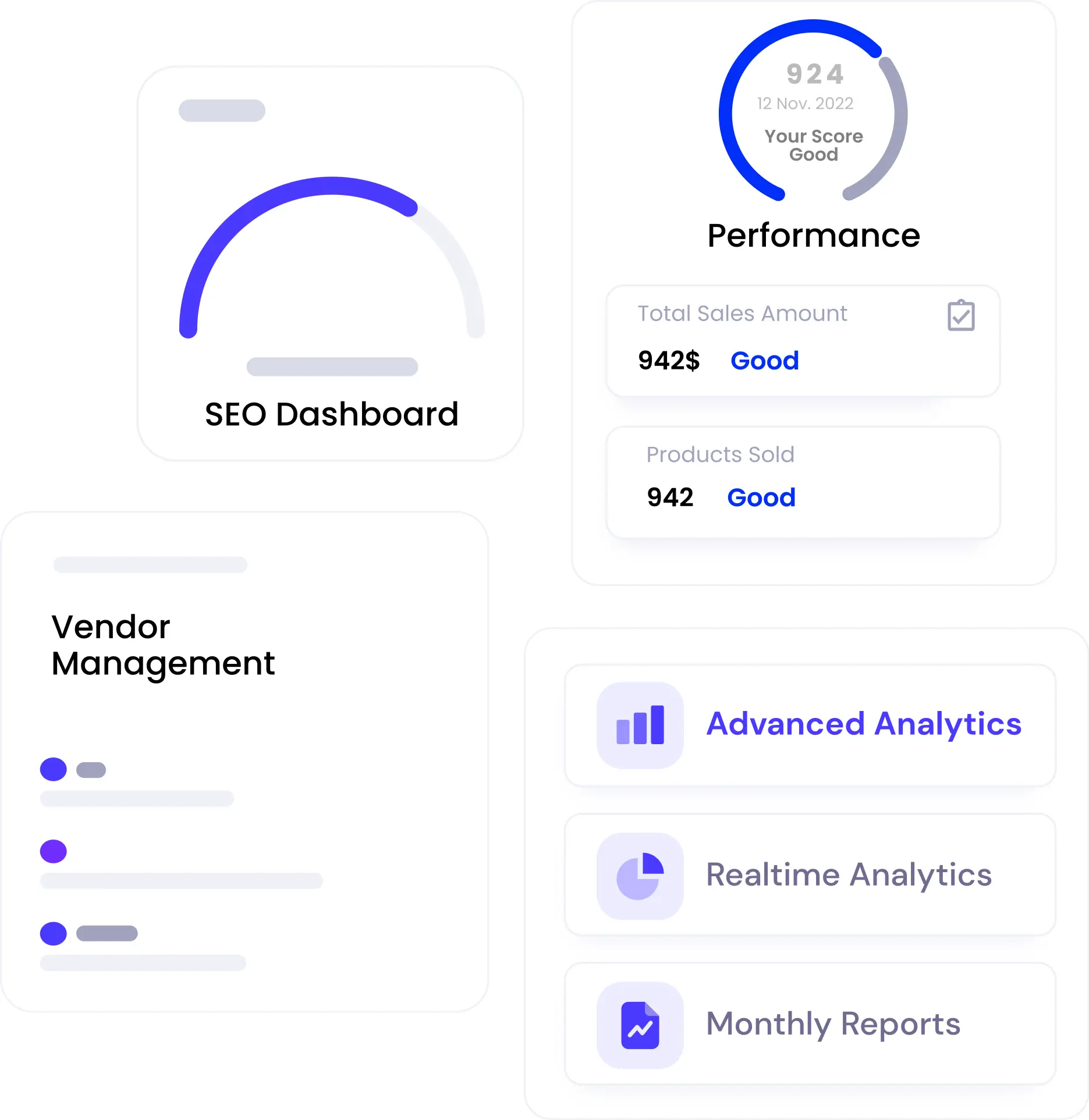

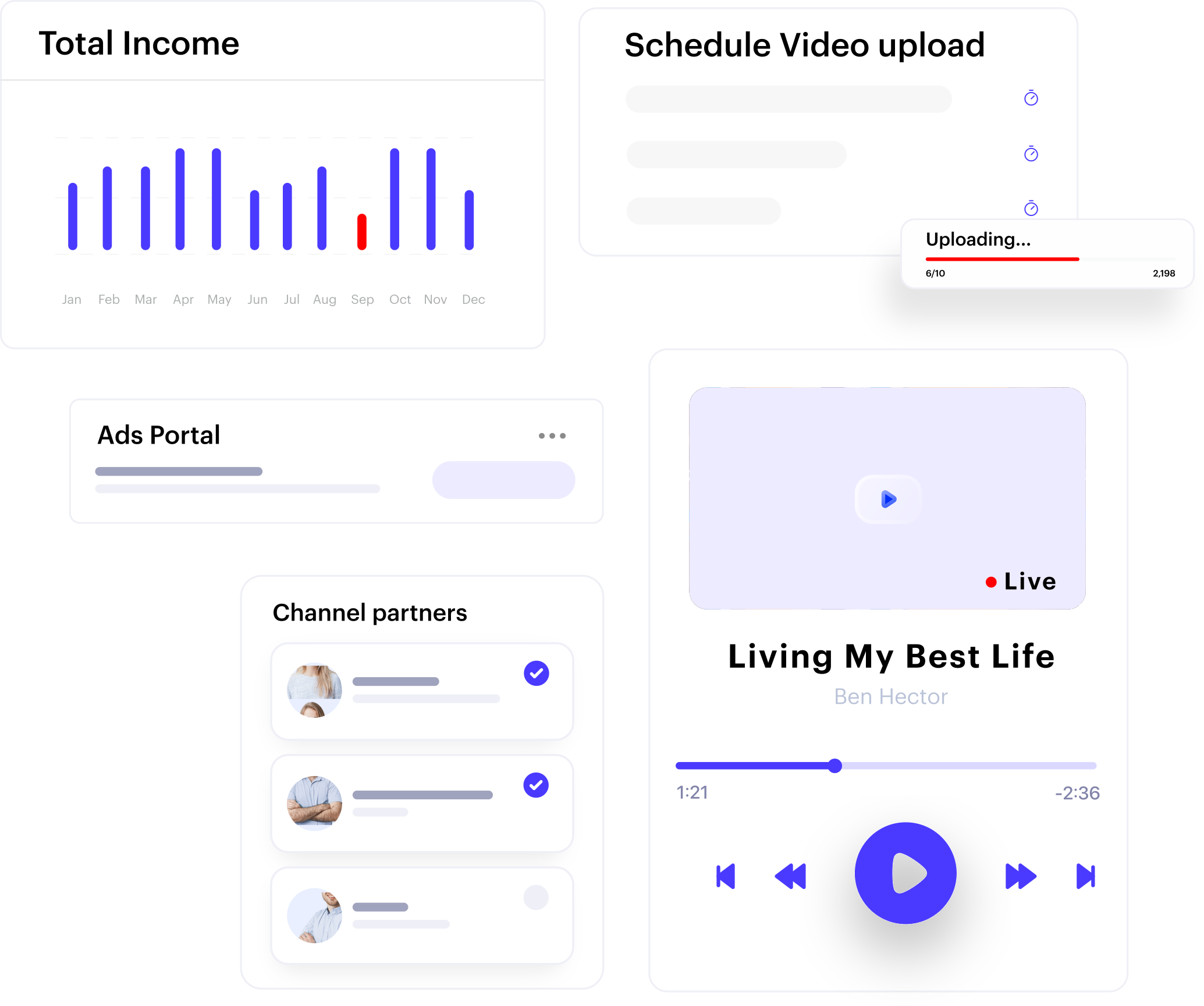

Services overview

Video On Demand & OTT Platform:

Unlock Endless Possibilities with Our High-Quality Video On Demand Solution. Go Headless and Achieve Infinite Scalability!
